In this article

- Intelligence everywhere, outcomes nowhere

- The expectation vs. reality gap

- Why reliability breaks before scale

- The error cascade problem

- Lesson one: More AI ≠ more outcomes

- Lesson two: Reliability requires system design

- Lesson three: Governance is not optional

- Choosing intentional design over accidental chaos

- FAQs

Enterprise AI has crossed a threshold. Nearly every organization is experimenting with copilots, assistants, or autonomous agents, often across multiple departments at once. On paper, adoption has never been higher. In practice, outcomes have rarely been more elusive, as agentic chaos begins to take hold.

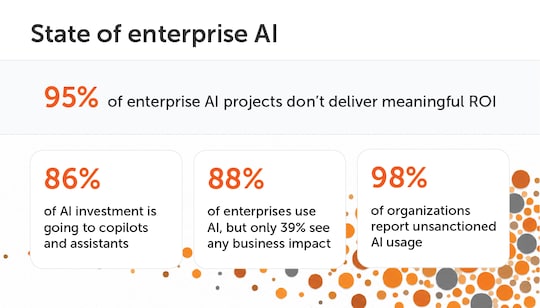

The numbers are stark. MIT research shows that 95% of enterprise AI pilots never deliver measurable return on investment, despite significant spend and executive focus. McKinsey reports that while 88% of organizations now use AI, only 39% see any EBIT impact — most of it under 5%.

AI is everywhere. Business results are not.

A familiar scene: Agentic chaos inside the enterprise

Consider a common scenario playing out across large organizations today.

Customer support teams deploy AI agents to resolve cases faster. Finance adopts copilots to speed up reconciliations. Sales operations introduces assistants to draft proposals and summarize accounts. Each deployment works locally. Cycle times drop. Teams feel more productive.

But zoom out, and something breaks.

Cases still stall waiting on downstream approvals. Errors creep in when data moves between systems. Costs rise as exceptions pile up. Leaders struggle to explain why productivity gains aren’t showing up in throughput, margins, or customer experience.

Nothing is “wrong” with the AI. What’s wrong is the system.

This is agentic chaos: intelligence spreading faster than structure, coordination, and governance.

Intelligence everywhere, outcomes nowhere

The core problem is deceptively simple. Personal productivity does not automatically translate into enterprise productivity.

AI tools are exceptional at accelerating individual tasks: writing, summarizing, researching, classifying. But most enterprise value is created through processes, not tasks. If those processes remain fragmented, accelerated work simply hits the next bottleneck faster.

Nowhere is this more visible than in the rise of copilots. According to Menlo Ventures, 86% of enterprise spending on general-purpose AI assistants flows into copilot-style tools aimed primarily at individual productivity rather than end-to-end execution.

The result is predictable: pockets of intelligence that don’t compound. A faster step inside a broken process doesn’t fix the process. It just exposes its limits more quickly.
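One way to see why a faster step does not fix the process is to treat the workflow as a serial pipeline whose steady-state throughput is capped by its slowest stage. The sketch below is only an illustration under that simplifying assumption; the stage names and hourly rates are hypothetical, not figures from any study cited here.

```python
from typing import Mapping


def throughput(stage_rates: Mapping[str, float]) -> float:
    """Steady-state throughput of a serial process: the slowest stage's rate."""
    return min(stage_rates.values())


def bottleneck(stage_rates: Mapping[str, float]) -> str:
    """Name of the stage that currently limits the whole process."""
    return min(stage_rates, key=lambda stage: stage_rates[stage])


if __name__ == "__main__":
    # Hypothetical rates, in cases per hour, for a three-stage workflow.
    before = {"draft": 20.0, "approve": 5.0, "post": 12.0}
    # Suppose a copilot triples the drafting rate and nothing else changes.
    after = {**before, "draft": 60.0}

    print(throughput(before), bottleneck(before))  # 5.0 approve
    print(throughput(after), bottleneck(after))    # 5.0 approve
```

In this toy model, tripling the drafting rate leaves end-to-end throughput unchanged because approvals remain the constraint.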

The expectation vs. reality gap

When enterprises approved AI budgets, expectations were ambitious:

- Autonomous, end-to-end workflows

- Measurable cost and productivity gains

- Faster decision-making across systems

- Impact at scale, not isolated wins

What many organizations actually experience looks quite different:

- Disconnected copilots embedded in siloed applications

- Fragmented gains that fail to stack

- Inconsistent or hard-to-predict agent behavior

- A fast-growing shadow AI sprawl of unsanctioned tools

The governance gap is widening fast. A Varonis study found that 98% of organizations have employees using unsanctioned apps, including AI tools, often without IT visibility or controls. With that much unmanaged tooling, complexity grows faster than value.

Why reliability breaks before scale

Even organizations that move beyond pilots quickly encounter a second barrier: reliability.

AI agents are probabilistic systems. They generate highly likely outputs — not guaranteed correct ones. Performance can be uneven, succeeding in one case and failing in an adjacent one, even when tasks appear similar. Some have described this phenomenon as “jagged intelligence.”

In consumer use, this inconsistency is tolerable. In enterprise workflows, it is not. When agents classify requests, route work, extract data, or trigger transactions, a single error can have operational or financial consequences — especially when those agents are chained together.

The error cascade problem

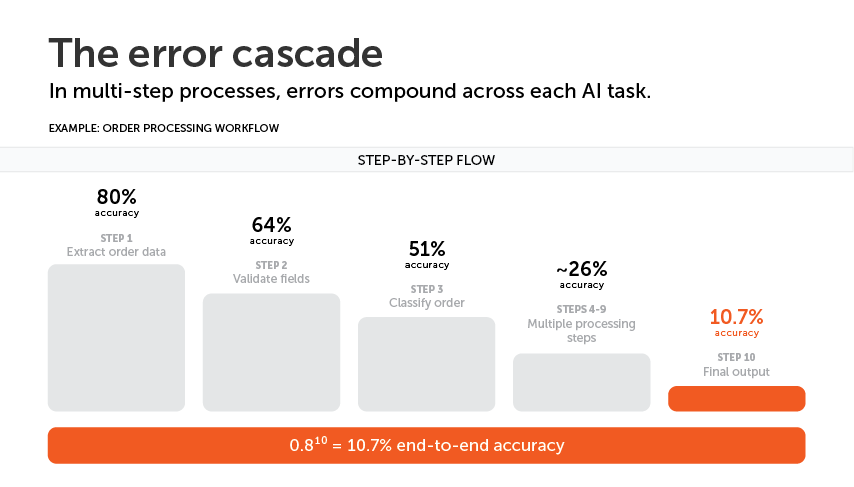

Errors compound in multi-step processes. If each task in a ten-step workflow operates at an optimistic 80% accuracy, end-to-end accuracy doesn’t stay at 80%. It collapses to roughly 11% (0.8 multiplied by itself ten times is about 0.107), assuming errors at each step are independent. With each handoff, probabilities multiply rather than add. A system that looks impressive step by step becomes fragile in aggregate.
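The arithmetic behind the cascade can be checked in a few lines. This is a minimal sketch that assumes every step succeeds or fails independently of the others, which real workflows may not do; the function name is illustrative.

```python
def end_to_end_accuracy(step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent errors."""
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    # Independent success probabilities multiply across handoffs.
    return step_accuracy ** steps


if __name__ == "__main__":
    for accuracy in (0.80, 0.95, 0.99):
        result = end_to_end_accuracy(accuracy, 10)
        print(f"{accuracy:.0%} per step over 10 steps -> {result:.1%} end to end")
    # 80% per step over 10 steps -> 10.7% end to end
    # 95% per step over 10 steps -> 59.9% end to end
    # 99% per step over 10 steps -> 90.4% end to end
```

Under this assumption, even 99% per-step accuracy loses almost ten points over ten chained steps.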

This is why many AI deployments demo well but struggle in production. The issue isn’t that the models are insufficient. It’s that probabilistic components are being asked to behave like deterministic systems. That design choice mathematically guarantees disappointment.

Lesson one: More AI ≠ more outcomes

The first lesson enterprises are learning is that AI adoption alone does not create value.

Outcomes come from processes. If intelligence isn’t intentionally engineered into an end-to-end workflow, it won’t move the metrics that matter.

Organizations seeing real impact start somewhere else. They begin with a business outcome, map the full process that produces it, and then apply enterprise AI orchestration to connect systems, workflows, and decisions end to end. Optimization shifts from isolated tasks to the system as a whole.

That’s when productivity finally reaches the bottom line.

Lesson two: Reliability requires system design

High-performing organizations don’t deploy unconstrained agents and hope for the best. They design systems that blend execution modes:

- AI handles judgment, reasoning, and adaptability

- Deterministic actions enforce correctness and repeatability

- Business context grounds decisions in real constraints

- Humans intervene only where judgment truly adds value

This isn’t about limiting AI. It’s about applying it where it excels.

When autonomy is balanced with structure, error stops compounding. Reliability improves not because models get smarter, but because the system does.
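The blend of execution modes described above might look something like the following sketch. It is not a description of any particular product: the `Invoice` type, the `validate` rules, and the `extract` callable (a stand-in for any AI extraction step) are all hypothetical. The idea is that a probabilistic step proposes a result, deterministic rules check it, and only cases that still fail are routed to a person.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Invoice:
    vendor: str
    subtotal: float
    tax: float
    total: float


def validate(invoice: Invoice) -> Optional[str]:
    """Deterministic rules: return the reason a rule failed, or None if all pass."""
    if not invoice.vendor.strip():
        return "missing vendor"
    if min(invoice.subtotal, invoice.tax, invoice.total) < 0:
        return "negative amount"
    if abs(invoice.subtotal + invoice.tax - invoice.total) > 0.01:
        return "total does not equal subtotal + tax"
    return None


def process(raw_text: str,
            extract: Callable[[str], Invoice],
            max_attempts: int = 2) -> dict:
    """Run a probabilistic extraction step behind a deterministic gate.

    `extract` is a placeholder for an AI call (an assumption of this sketch).
    """
    reason: Optional[str] = "not attempted"
    for attempt in range(1, max_attempts + 1):
        invoice = extract(raw_text)
        reason = validate(invoice)
        if reason is None:
            return {"status": "approved", "invoice": invoice, "attempts": attempt}
    # The error is caught here instead of flowing into downstream steps.
    return {"status": "needs_human_review", "reason": reason,
            "attempts": max_attempts}


if __name__ == "__main__":
    # Stub standing in for a model: the first answer is wrong, the second is right.
    answers = iter([
        Invoice("Acme", 100.0, 8.0, 180.0),
        Invoice("Acme", 100.0, 8.0, 108.0),
    ])
    result = process("invoice text", lambda _text: next(answers))
    print(result["status"], result["attempts"])  # approved 2
```

The design choice here is that the gate, not the model, decides whether work moves forward, so a bad output is stopped at the step that produced it.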

Lesson three: Governance is not optional

The final lesson is emerging now, and it’s the most dangerous to ignore. AI adoption is scaling faster than governance. Enterprises already run hundreds of applications, many disconnected. Now those applications increasingly ship with embedded agents, while employees introduce their own tools independently.

This creates shadow AI at scale. Unlike passive software, agents take actions. They move data, trigger workflows, and consume resources continuously. Without centralized visibility and control, risk grows silently until a failure forces attention.

A sprawl of software is messy.

A sprawl of autonomous agents is operationally dangerous.
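As a rough illustration of what centralized visibility and control could mean in practice, the sketch below routes every agent action through one registry that holds an allowlist and records each request. The `AgentRegistry` class, agent IDs, and action names are hypothetical, and a real governance layer would need far more (identity, approvals, data policies).

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set


@dataclass
class AgentRegistry:
    """Central record of which agent may perform which actions (hypothetical)."""
    allowed: Dict[str, Set[str]] = field(default_factory=dict)
    audit_log: List[dict] = field(default_factory=list)

    def register(self, agent_id: str, actions: Set[str]) -> None:
        """Sanction an agent and the actions it may take."""
        self.allowed[agent_id] = set(actions)

    def authorize(self, agent_id: str, action: str) -> bool:
        """Permit only registered agents and listed actions; log every request."""
        permitted = action in self.allowed.get(agent_id, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "permitted": permitted,
        })
        return permitted


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register("support-bot", {"read_case", "draft_reply"})

    print(registry.authorize("support-bot", "read_case"))     # True
    print(registry.authorize("support-bot", "issue_refund"))  # False
    print(registry.authorize("shadow-tool", "read_case"))     # False
    print(len(registry.audit_log))                            # 3
```

Denied requests are logged as well as permitted ones, so unsanctioned agents become visible instead of failing silently.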

Choosing intentional design over accidental chaos

Agentic chaos is not inevitable. It’s the result of intelligence spreading without system design, coordination, or governance.

The organizations converting AI ambition into durable outcomes share a common approach. They are deliberate about where intelligence shows up, how systems connect, and how autonomy is governed.

In a crowded and fast-moving AI landscape, outcome focus and strong system design aren’t constraints — they are the advantage.

You can intentionally build a reliable, unified AI ecosystem. Or you can accumulate disconnected agents and clean up the mess later. The choice is already being made, whether consciously or not.

Take a closer look at how leading organizations are moving from agentic chaos to measurable outcomes with orchestrated, governed systems.

FAQs

What is agentic chaos in an enterprise context?

Agentic chaos is what happens when AI agents are deployed across an organization without coordination, shared workflows, or governance. Individually, they may work well. But at the system level, they create fragmentation, slowing down outcomes instead of improving them.

What is the difference between agentic chaos and chaos agents?

“Chaos agents” implies that individual AI agents are unreliable or misbehaving. In reality, agentic chaos is a system-level issue. Even well-performing agents can create poor outcomes when they operate without coordination, shared workflows, or clear decision boundaries.

Why is AI adoption failing to deliver measurable business ROI?

Most AI deployments focus on improving individual tasks like writing, summarizing, and classifying rather than end-to-end processes. Without connecting those improvements across systems and workflows, gains stay isolated and don’t translate into business results.

What causes error cascades in AI workflows?

Error cascades occur when small inaccuracies compound across multi-step processes. Because AI systems are probabilistic, each step introduces some uncertainty. When those steps are chained together without controls, overall reliability drops quickly.

How does jagged intelligence affect AI agent reliability?

Jagged intelligence refers to the uneven performance of AI systems. They can succeed in one scenario and fail in a very similar one. In enterprise workflows, that inconsistency makes agents unreliable unless they operate within specially designed frameworks and platforms.


Peter White is the Chief Product Officer at Automation Anywhere.
